Multimodal interaction with humanoid robots

Context

This report summarizes the work done during an internship at SoftBank Robotics Europe (SBRE).

The purpose of this internship was to design and build demo applications showcasing the features of Pepper. We also wanted to collaborate with SBRE's Developer Experience team, and use the knowledge acquired while building these apps to produce guidelines for developing on Pepper.

Pepper is a humanoid robot that uses verbal communication, gestures and a tablet mounted to its chest to interact with humans. Applications developed for Pepper run natively on Android, and developers can use the qiSDK to exploit robot-specific features (voice, gestures, shoulder lights).

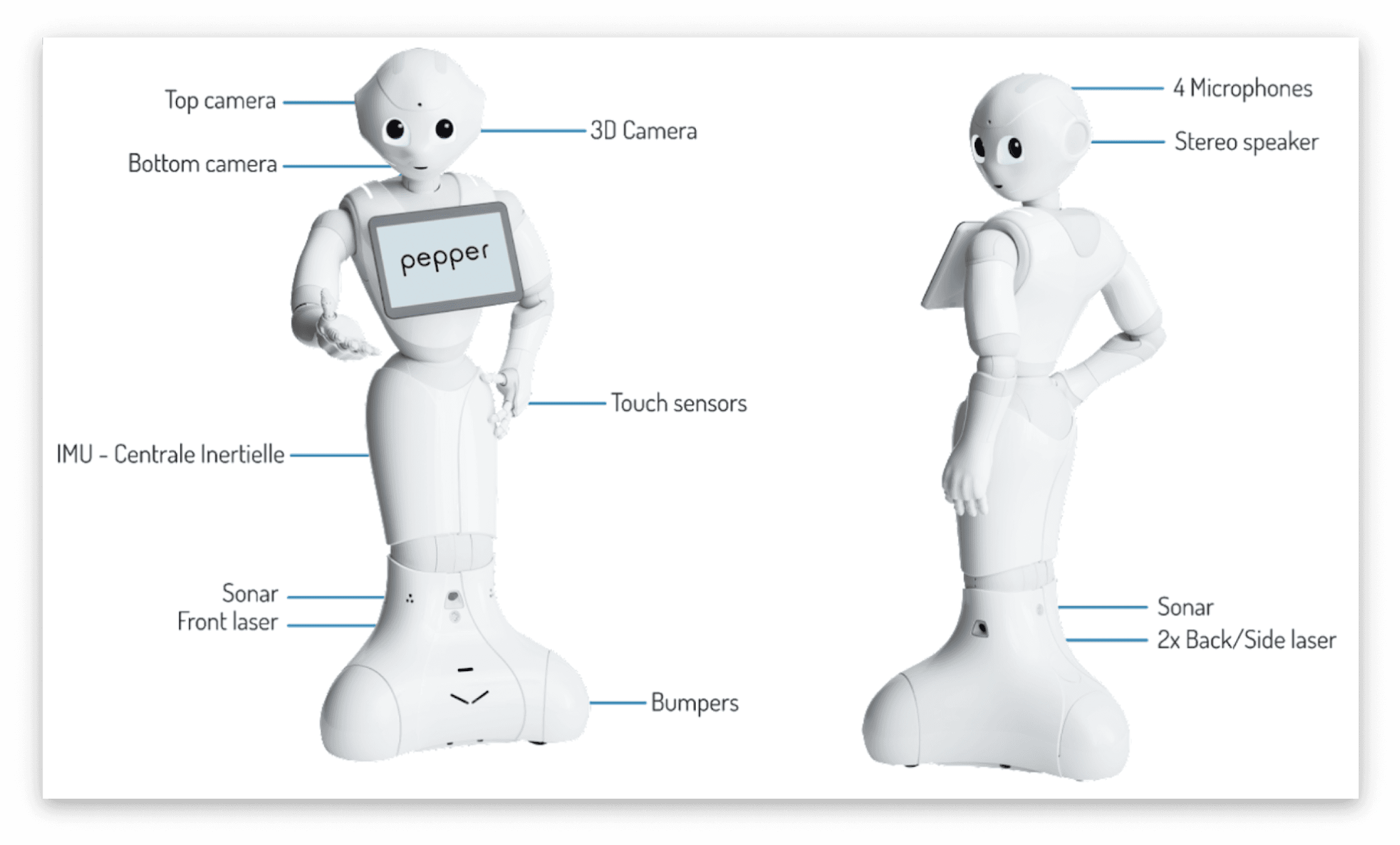

Pepper hardware

Design Goals

Create experiences that encourage natural language interactions. Ideally, the tablet should only serve as a support for conversations.

Help users understand what the robot can and can't do. It's very likely that every user will be interacting with Pepper for the first time.

Design the tablet UI specifically for Pepper's use cases. The tablet is far away from the user, and the robot can move during a conversation.

Make sure that users can use the tablet as a backup in loud environments.

Extract valuable information and write key takeaways for developers.

Work overview

All the resources presented here come from the Retail demo, one of the applications developed during the internship. Retail was one of the main segments the sales team wanted to focus on for Pepper. The application was used during events (e.g. Vivatech) to showcase the robot.

Natural communication

Pepper's applications should be designed for natural language interaction. It is, however, very challenging for Pepper to handle open-ended conversations. This limitation was one of the main reasons we used conversation flows to build the retail demo application. We wanted Pepper to lead the conversation as often as possible in order to limit open-endedness.

The conversation flows contain voice lines from Pepper and potential answers from the user. Once validated, these flows were used to create chatbots with QiChat, SoftBank's own chatbot engine.

Here is one of the flows from the retail demo application. Pepper's lines are in blue. See the flow in Figma.
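To make the idea of a Pepper-led flow more concrete, here is a minimal, language-agnostic sketch in Python. It is not the QiChat code used in the demo: the step names, Pepper lines and expected answers are all invented for illustration, and the helpers `next_step` and `hints` are hypothetical. It only shows the principle of a flow where each step holds one Pepper line and a small set of expected user answers.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Step:
    """One turn of a Pepper-led flow: Pepper's line and the answers it expects."""
    pepper_line: str
    # Maps an expected user answer (lowercase) to the name of the next step.
    answers: Dict[str, str] = field(default_factory=dict)


# Hypothetical flow: every name and sentence below is an assumption,
# not content from the actual retail demo.
FLOW: Dict[str, Step] = {
    "welcome": Step(
        "Hi! Are you looking for a product or picking up an order?",
        {"a product": "product", "an order": "pickup"},
    ),
    "product": Step(
        "Here is our best seller. Would you like to see more?",
        {"yes": "product", "no": "welcome"},
    ),
    "pickup": Step(
        "Please sign on my tablet to collect your order.",
        {"done": "welcome"},
    ),
}


def next_step(current: str, user_answer: str) -> str:
    """Return the next step name, or stay on the current step if the
    answer is not one of the expected ones (Pepper would then re-prompt)."""
    step = FLOW[current]
    return step.answers.get(user_answer.strip().lower(), current)


def hints(current: str) -> List[str]:
    """Expected answers formatted as quoted sentences the tablet could show."""
    return [f'"{answer.capitalize()}"' for answer in FLOW[current].answers]
```

Because Pepper asks a closed question at every step, the set of expected answers stays small, and the same set can be reused as hints on the tablet.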

Tablet interface

The conversation flows were used to determine the supporting content displayed on the tablet. This content would either display some information that cannot be communicated by voice (e.g. picture of a product) or give hints to continue the conversation.

Throughout the UI design process, it was essential to keep in mind that Pepper's tablet is different from any other tablet: the robot can move while it speaks, and the screen is rather far away from the user. This creates unique design challenges. We'll use the screens of the retail demo app to show how we addressed them.

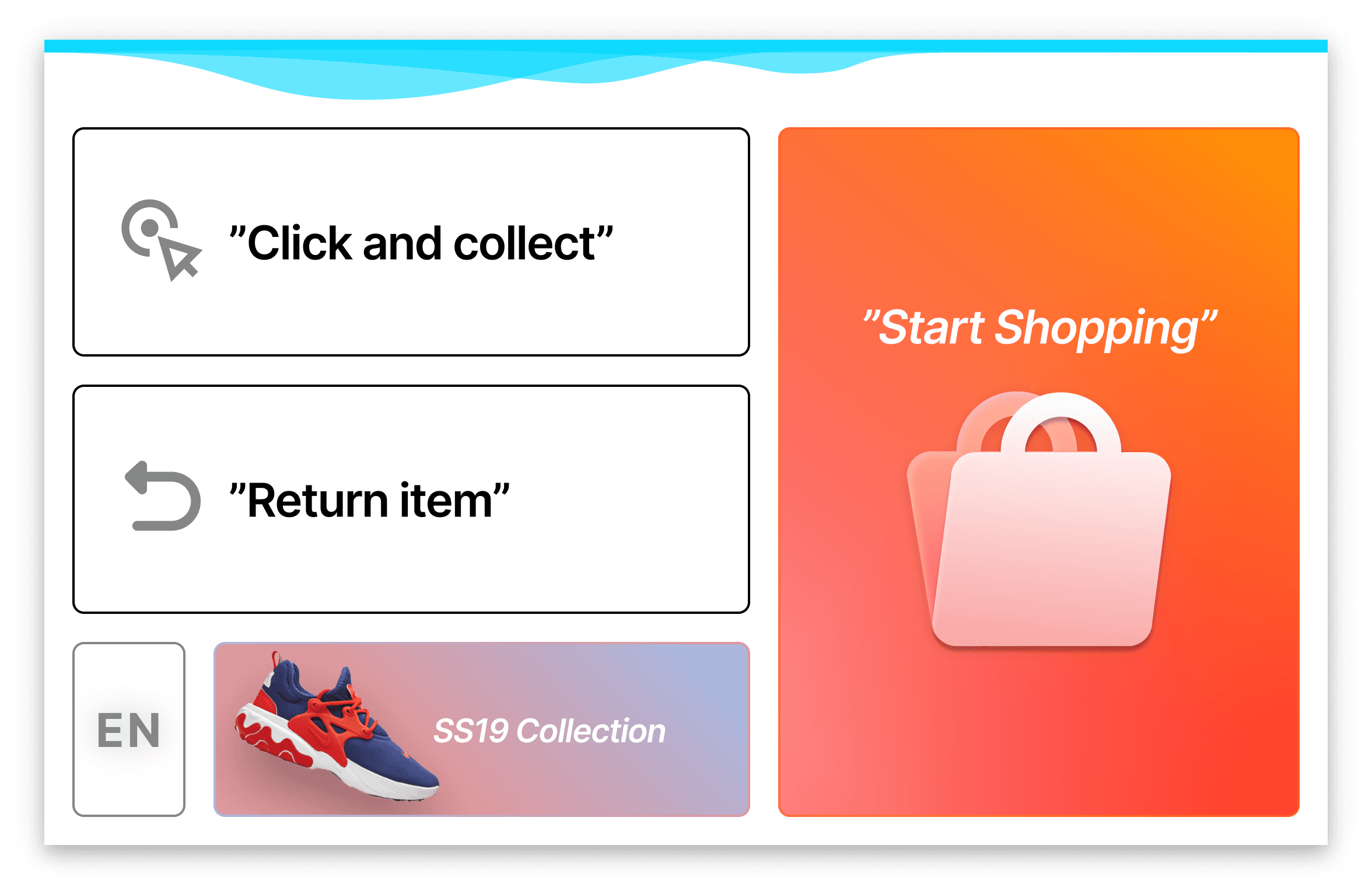

Main screen of retail demo

This main screen illustrates the size of the touch targets. They are enormous because they need to be easy to touch from afar. The reasoning behind the huge font size is identical: the text needs to be readable from about one and a half meters. The quotes around the text signify that users can say the sentence out loud instead of touching the tablet.

Note: We previously used speech bubble icons to signify that the text of a button could be said out loud. However, during usability testing, we found that users did not understand them. The bubbles were not readable, and making them bigger would have taken too much space on the 10" screen.

Note: The cyan top bar that you see on every screen is part of the qiSDK and cannot be modified. It signals to the user that Pepper is listening and displays the sentence that the robot understood.

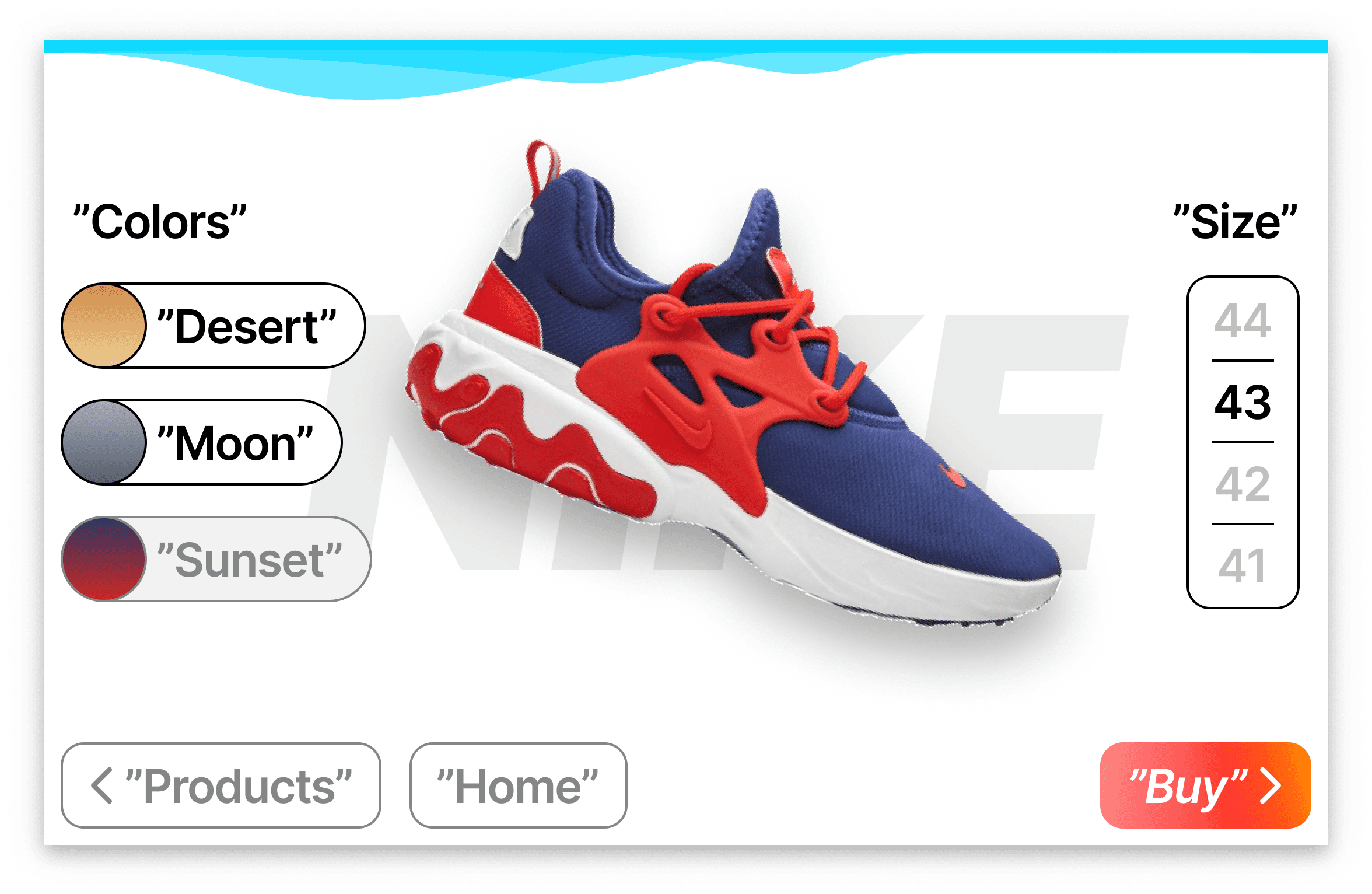

Product display screen

Here is another screenshot displaying a product. This part of the application was used to show what kind of information could be displayed on the tablet to support the conversation with the robot.

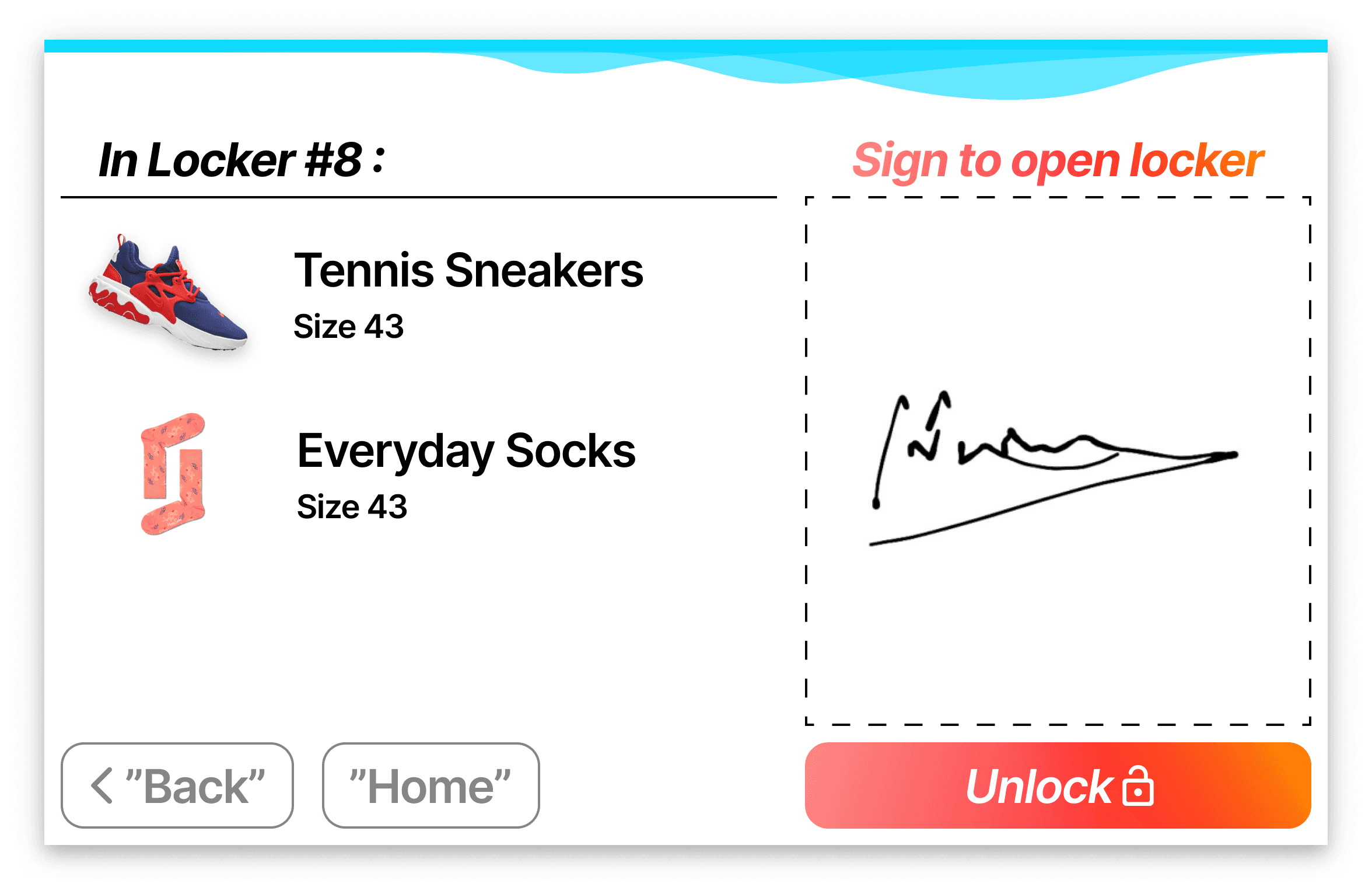

Strong tablet interaction screen

A final interaction shows how Pepper could enhance the shopping experience. Here, users are asked to sign on the tablet to complete their click and collect purchase. In theory, the whole interaction can be done on Pepper for such user flows.

Recommendations to developers

After conducting some user tests, mainly with interns who were not familiar with Pepper, we wrote some best practices for developing on Pepper. Here are some of the recommendations that we ended up making to Pepper developers.

We recommend a font size of 40 for all main text; 32 can be used for secondary text. In general, there should be a big size difference between the two for visibility.

A minimum line thickness of 2 points is recommended.

There should be a strong emphasis on the "what to say next" actions. They should stand out from the rest of the UI.

The interface should always use a light theme. Interaction with Pepper will almost always take place during the day.

Minimal padding on the sides of the screen (14px) is acceptable, because Pepper's tablet has big glass bezels that can be seen as padding. However, all touch targets should be big and have large spacing (24px minimum) between them.

We should make sure not to lose users who show up mid-flow (we cannot always tell when a user leaves). For this, every screen needs to link back to the home page.
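The recommendations above can also be expressed as a small set of constants with a checker, which is roughly what a first step toward a design system could look like. This is only an illustrative Python sketch, not part of the qiSDK or of the demo app: the `Screen` type, its fields and `check_screen` are hypothetical, and treating the recommended main font size as a lower bound is an assumption.

```python
from dataclasses import dataclass
from typing import List

# Values taken from the recommendations above.
MAIN_FONT_SIZE = 40          # main text
SECONDARY_FONT_SIZE = 32     # secondary text
MIN_LINE_THICKNESS_PT = 2    # minimum line thickness, in points
SIDE_PADDING_PX = 14         # minimal padding on the sides of the screen
MIN_TARGET_SPACING_PX = 24   # minimum spacing between touch targets


@dataclass
class Screen:
    """Hypothetical description of one tablet screen (illustration only)."""
    name: str
    main_font_size: float
    line_thickness_pt: float
    target_spacing_px: float
    has_home_link: bool


def check_screen(screen: Screen) -> List[str]:
    """Return a list of guideline violations for a screen (empty if none)."""
    problems: List[str] = []
    # Assumption: the recommended size of 40 is treated as a minimum.
    if screen.main_font_size < MAIN_FONT_SIZE:
        problems.append(f"{screen.name}: main text smaller than {MAIN_FONT_SIZE}")
    if screen.line_thickness_pt < MIN_LINE_THICKNESS_PT:
        problems.append(f"{screen.name}: lines thinner than {MIN_LINE_THICKNESS_PT} points")
    if screen.target_spacing_px < MIN_TARGET_SPACING_PX:
        problems.append(f"{screen.name}: touch targets closer than {MIN_TARGET_SPACING_PX}px")
    if not screen.has_home_link:
        problems.append(f"{screen.name}: no link back to the home page")
    return problems
```

For example, `check_screen(Screen("product", 28, 1, 8, False))` reports four problems, while a screen that follows every recommendation returns an empty list.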

Final notes

Pepper is a peculiar platform. Designing for it is very much an exercise in empathy, as it is essential to teach every user how to interact with the robot in just a few minutes. Creating applications for this platform was a very enriching exercise from a design perspective.

In hindsight, the scope of the internship was rather large for a six-month project. Designing for Pepper is extremely complex, mostly because of the platform's uniqueness. I feel I would have needed a lot more time, perhaps a year, to fully grasp all the specifics of designing for it.

Extending the internship would have allowed me to put together a basic design system instead of a few guidelines. Design systems are abundant online, with every company giving its own twist on very similar components. However, because Pepper is such a unique platform, none of these systems would really work for it. These observations make me believe that a purpose-built design system for Pepper would make a lot of sense.

Gualtiero Mottola

Aug 2019